Reality of Modern Data Architecture

We tried Data Warehouses. We built Data Lakes. Both failed to solve the complete puzzle.

Is the Lakehouse the unified future we were promised, or just another buzzword?

If you work with data today, you are likely feeling overwhelmed. The landscape is messy. We are generating petabytes of information: structured logs, unstructured text, images, and real-time IoT streams. The pressure to turn that raw noise into actionable signal (and, increasingly, AI models) has never been higher.

For the past decade, data teams have been caught in a tug-of-war between two dominant architectural patterns: the rigid reliability of the Data Warehouse and the chaotic flexibility of the Data Lake.

Neither is perfect. Both create silos.

Enter the Data Lakehouse.

It is the hottest term in data engineering right now, promising to unify our fractured infrastructure. But what is it really? Why does it matter? And most importantly, what is the ground-level reality of adopting it?

Today, we are cutting through the hype to explore the architecture designed to clean up the mess.

How We Got Here

The Tale of Two Silos

To understand the Lakehouse, we first need to understand the problem it solves. Historically, organizations have maintained two separate, disconnected systems for managing data.

1. The Data Warehouse (The Library)

What it is

A highly structured database optimized for fast queries and business intelligence (BI) reporting. Think of it like a meticulously organized library.

The Good

Fast and reliable

Clean data with schema-on-write

Supports ACID transactions and strong data integrity

The Bad

Expensive and rigid

Poor fit for unstructured data like video or text

Struggles to scale for modern machine learning workloads

2. The Data Lake (The Dumping Ground)

What it is

A vast repository built on cheap cloud object storage, such as AWS S3 or Azure Blob, that holds raw data in its native format.

The Good

Cheap and virtually unlimited in scale

Extremely flexible with schema-on-read

Ideal for exploratory analysis and data science

The Bad

Often turns into a “data swamp”

Weak governance and data quality controls

Poor performance for reliable BI reporting
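The schema-on-write and schema-on-read trade-off from the two lists above can be made concrete with a small sketch. This is a toy illustration in plain Python, not how any particular warehouse or lake engine works; the `ORDER_SCHEMA` columns and both helper functions are hypothetical names invented for the example.

```python
import json

# Hypothetical schema for illustration: column name -> expected Python type.
ORDER_SCHEMA = {"order_id": int, "amount": float}

def write_with_schema(table, record, schema=ORDER_SCHEMA):
    """Schema-on-write: validate before storing, so bad records never land."""
    if set(record) != set(schema):
        raise ValueError(f"unexpected columns: {sorted(record)}")
    for column, expected_type in schema.items():
        if not isinstance(record[column], expected_type):
            raise ValueError(f"{column} must be {expected_type.__name__}")
    table.append(record)

def read_with_schema(raw_lines, schema=ORDER_SCHEMA):
    """Schema-on-read: store anything, interpret (and possibly fail) at query time."""
    good, bad = [], []
    for line in raw_lines:
        try:
            record = json.loads(line)
            good.append({col: cast(record[col]) for col, cast in schema.items()})
        except (ValueError, KeyError, TypeError):
            bad.append(line)
    return good, bad

# Warehouse style: the malformed record is rejected at write time.
warehouse_table = []
write_with_schema(warehouse_table, {"order_id": 1, "amount": 9.99})
try:
    write_with_schema(warehouse_table, {"order_id": "2", "amount": 9.99})
    rejected = False
except ValueError:
    rejected = True

# Lake style: everything is accepted, and problems surface only when reading.
lake_files = ['{"order_id": 1, "amount": "9.99"}', '{"order_id": "oops"}', "not json"]
good_rows, bad_rows = read_with_schema(lake_files)
```

The warehouse path pays the validation cost up front and keeps the table clean. The lake path accepts every write cheaply, but each reader has to decide what to do with the rows that do not parse, which is one way a swamp starts.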

Core Problem

Because BI and application teams needed Warehouses while AI teams needed Lakes, companies ended up running both. This required complex, brittle ETL pipelines that constantly moved and duplicated data between the two systems.

The result

Higher infrastructure costs

Inconsistent data definitions

Data engineering teams constantly fighting fires

Enter the Data Lakehouse

The best of both worlds?

The Data Lakehouse is an architectural paradigm designed to eliminate this two-system dilemma.

Definition

A Data Lakehouse combines the low-cost, scalable storage of a Data Lake with the structured management, ACID transactions, and performance features of a Data Warehouse, all in a single platform.

How does it work?

It adds a smart metadata layer on top of raw cloud object storage.

Imagine a messy data lake. Now imagine laying a sophisticated, invisible grid over it that:

Catalogs where everything lives

Enforces rules on how data is written

Tracks file-level statistics and indexes so queries can skip irrelevant data and stay fast and reliable

This metadata layer, powered by open table formats like Delta Lake, Apache Iceberg, or Apache Hudi, allows raw files in object storage to behave like structured database tables.
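To make the "invisible grid" idea less abstract, here is a minimal sketch of a metadata layer in plain Python. It is a toy under stated assumptions, not the actual design of Delta Lake, Iceberg, or Hudi (which persist this information in log or manifest files next to the data). The class names, the `ts` column, and the `s3://example-bucket/...` paths are all hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class DataFile:
    path: str      # where the file lives in object storage (hypothetical path)
    min_ts: int    # smallest event timestamp stored in the file
    max_ts: int    # largest event timestamp stored in the file

@dataclass
class TableMetadata:
    schema: dict                                # column name -> Python type
    files: list = field(default_factory=list)   # the catalog of data files

    def register(self, path, rows):
        """Enforce the schema, then catalog the file with its min/max stats."""
        for row in rows:
            for column, expected in self.schema.items():
                if not isinstance(row.get(column), expected):
                    raise ValueError(f"{path}: bad value for {column!r}")
        timestamps = [row["ts"] for row in rows]
        self.files.append(DataFile(path, min(timestamps), max(timestamps)))

    def files_for_range(self, start, end):
        """Use the stats to skip files that cannot contain matching rows."""
        return [f.path for f in self.files if f.max_ts >= start and f.min_ts <= end]

events = TableMetadata(schema={"ts": int, "user": str})
events.register("s3://example-bucket/events/part-0.parquet",
                [{"ts": 100, "user": "a"}, {"ts": 199, "user": "b"}])
events.register("s3://example-bucket/events/part-1.parquet",
                [{"ts": 200, "user": "c"}, {"ts": 299, "user": "d"}])

# A query for ts between 210 and 250 only needs to open one of the two files.
to_scan = events.files_for_range(210, 250)
```

All three jobs of the grid show up here: `files` is the catalog, `register` enforces the write rules, and `files_for_range` uses stored statistics so a query engine can avoid reading files that cannot match.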

Why the Lakehouse Matters (The Ideal)

If implemented correctly, the Lakehouse solves several fundamental business and technical challenges.

Unification of BI and AI

This is the biggest win. Data analysts using SQL for dashboards and data scientists using Python or R for machine learning can finally work from the same data source.

No more debates about which system has the “correct” data.

ACID Transactions on Raw Data

In traditional data lakes, a failed pipeline could leave corrupted or partial data behind. Lakehouses bring warehouse-grade reliability to object storage.

You can safely:

Insert

Update

Delete

Roll back changes
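One way to picture how these operations stay safe on top of plain files is a versioned commit log, where every change publishes a new immutable snapshot and a failed write publishes nothing. The sketch below is a simplified illustration in plain Python, assuming a single writer. The `VersionedTable` class and the file names are hypothetical, and real table formats add concurrency control and durable log storage that are omitted here.

```python
class VersionedTable:
    """Toy commit log: each version is an immutable set of data file paths."""

    def __init__(self):
        self.versions = [frozenset()]   # version 0 is the empty table

    def current(self):
        return self.versions[-1]

    def commit(self, add=(), remove=()):
        """Publish a new snapshot only if the whole change is valid."""
        snapshot = self.current()
        missing = set(remove) - snapshot
        if missing:
            raise ValueError(f"cannot remove unknown files: {sorted(missing)}")
        # Readers keep seeing the old snapshot until this single append happens.
        self.versions.append((snapshot - set(remove)) | set(add))
        return len(self.versions) - 1

    def rollback(self, version):
        """Roll back by publishing an earlier snapshot as the newest version."""
        self.versions.append(self.versions[version])
        return len(self.versions) - 1

table = VersionedTable()
v1 = table.commit(add=["a.parquet", "b.parquet"])              # insert
v2 = table.commit(add=["b2.parquet"], remove=["b.parquet"])    # update by rewriting a file
try:
    table.commit(add=["c.parquet"], remove=["nope.parquet"])   # invalid change
    failed = False
except ValueError:
    failed = True
after_failure = table.current()   # unchanged: the failed commit left no trace
v3 = table.rollback(v1)           # restore the state as of version 1
```

Because data files are never modified in place, an update is just "add the rewritten file, remove the old one" in a single commit, and rolling back is a matter of pointing at an older snapshot.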

Governance and Security

Instead of securing two separate platforms, the Lakehouse provides a unified control plane for:

Access policies

Auditing

Data lineage

This applies across all data types, structured and unstructured.

Cost Efficiency

By storing data in low-cost object storage and only paying for compute when queries run, organizations avoid the massive expense of keeping petabytes of data in proprietary warehouse systems.

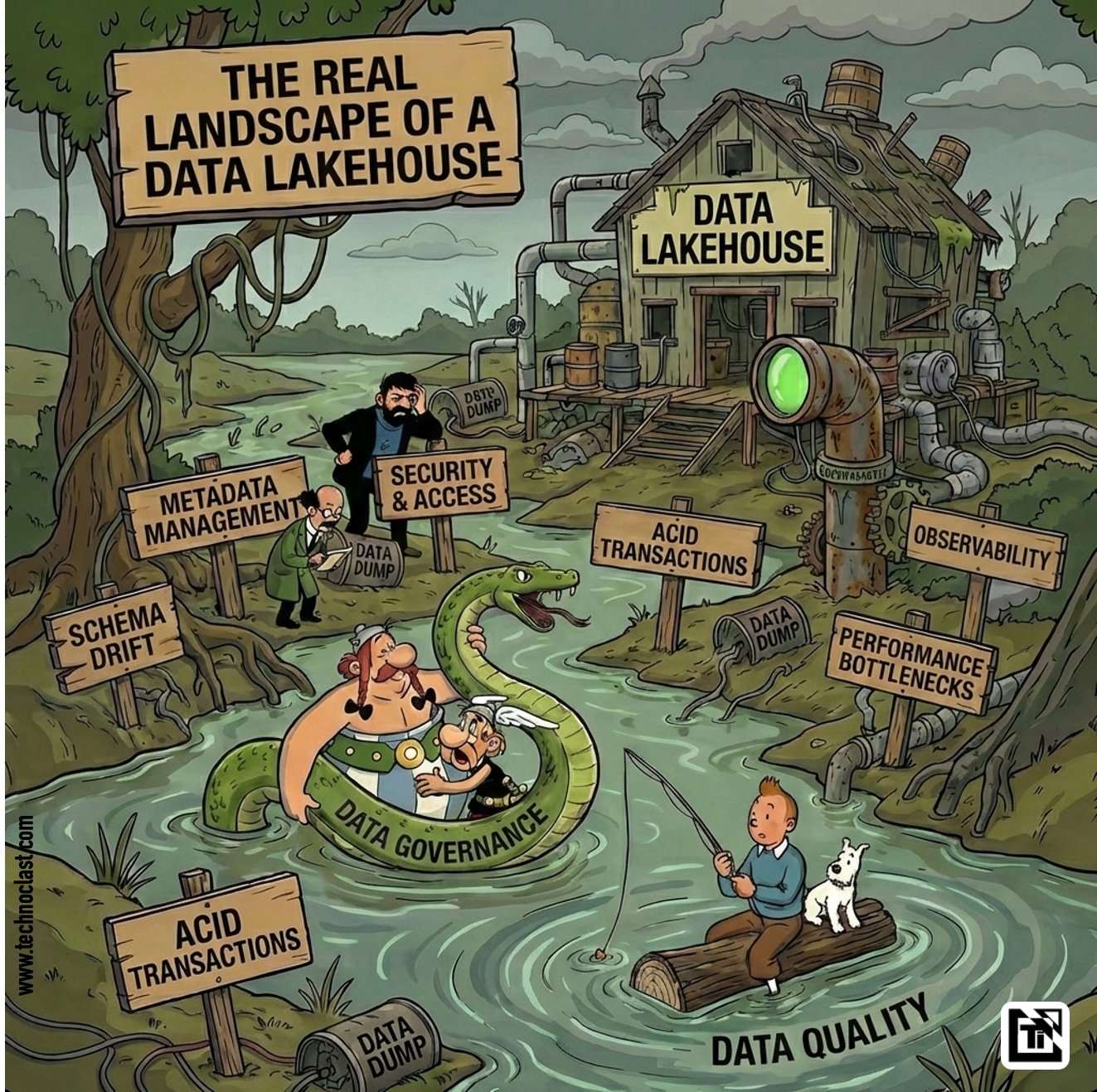

Now, The Reality Check

The Messy Truth

If the Data Lakehouse is so powerful, why is adoption still hard? Because the reality on the ground is messier than the marketing.

1. It Is Still Relatively Immature

Data Warehouses have benefited from over 30 years of refinement. The Lakehouse, as we know it today, is barely a decade old. Tools are evolving quickly, and best practices are still emerging.

2. The Complexity Shift

The Lakehouse removes architectural silos but shifts complexity to engineering.

Teams must manage:

Metadata layers

File compaction and sizing

Table maintenance tasks like vacuuming old files

You are not buying a finished product. You are building a platform.
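To give a feel for the maintenance work listed above, here is a rough sketch of how compaction and vacuum planning might be reasoned about. It is plain Python with hypothetical function names and illustrative thresholds (file sizes in MB, a 168-hour retention window); real engines ship their own maintenance commands with their own defaults.

```python
def plan_compaction(file_sizes_mb, target_mb=128, small_mb=32):
    """Group small files into batches of roughly target_mb each."""
    small = sorted((size, path) for path, size in file_sizes_mb.items()
                   if size < small_mb)
    batches, current, current_size = [], [], 0
    for size, path in small:
        if current and current_size + size > target_mb:
            batches.append(current)
            current, current_size = [], 0
        current.append(path)
        current_size += size
    batches.append(current)
    # Rewriting a batch of zero or one file gains nothing, so drop those.
    return [batch for batch in batches if len(batch) > 1]

def plan_vacuum(all_files, live_files, file_age_hours, retention_hours=168):
    """Files no current snapshot needs, and older than the retention window, can go."""
    return sorted(path for path in all_files
                  if path not in live_files and file_age_hours[path] > retention_hours)

# Four small files and one large one; only the small ones are candidates.
sizes = {"f1": 10, "f2": 20, "f3": 30, "f4": 200, "f5": 5}
batches = plan_compaction(sizes, target_mb=40, small_mb=32)

# Only unreferenced files older than the retention window are deletable.
deletable = plan_vacuum(
    all_files={"old.parquet", "live.parquet", "recent.parquet"},
    live_files={"live.parquet"},
    file_age_hours={"old.parquet": 500, "live.parquet": 500, "recent.parquet": 2},
)
```

The retention window in `plan_vacuum` is what keeps rollback and long-running readers safe: a file that was removed from the table an hour ago may still be needed by an older snapshot.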

3. Performance Requires Active Tuning

Warehouses are optimized for fast SQL queries out of the box. Lakehouses can match that performance, but only with proper tuning.

This includes:

Smart partitioning

File ordering strategies

Index and metadata optimization

Treat a Lakehouse like a lazy data lake, and dashboards will suffer.
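A small experiment shows why file ordering matters so much. The sketch below, in plain Python with made-up customer IDs, writes the same 1,000 values into ten files twice: once in arrival order and once sorted. It then counts how many files a point lookup would have to open if the engine can only rely on per-file min/max statistics. The helper names are hypothetical.

```python
def write_files(values, rows_per_file):
    """Split values into files and record each file's (min, max) stats."""
    chunks = [values[i:i + rows_per_file]
              for i in range(0, len(values), rows_per_file)]
    return [(min(chunk), max(chunk)) for chunk in chunks]

def files_scanned(file_stats, lookup):
    """Count files whose min/max range could contain the lookup value."""
    return sum(1 for low, high in file_stats if low <= lookup <= high)

# Hypothetical customer IDs 0..999 arriving in an interleaved order.
customer_ids = [(i % 10) * 100 + (i // 10) for i in range(1000)]

unsorted_stats = write_files(customer_ids, rows_per_file=100)
sorted_stats = write_files(sorted(customer_ids), rows_per_file=100)

scanned_unsorted = files_scanned(unsorted_stats, lookup=555)
scanned_sorted = files_scanned(sorted_stats, lookup=555)
```

In this toy setup, the arrival-order layout forces the lookup to open all ten files, because every file spans nearly the full ID range. The sorted layout narrows it to one. The data is identical in both cases; only the layout changed.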

4. The Open Format War

There is no single standard for the metadata layer. Teams must choose between:

Delta Lake

Apache Iceberg

Apache Hudi

Interoperability is improving, but choosing a format still feels like a high-stakes bet on the future.

The Path Forward

The Data Lakehouse is not a magic wand. It will not fix poor data quality or a broken data culture overnight.

But it is the most logical evolution of modern data architecture.

The demands of AI, real-time analytics, and scale make disconnected data silos unsustainable. Implementing a Lakehouse requires effort and a mindset shift, but the destination is worth it.

A unified, governed, and scalable data platform is no longer optional.

The landscape is still messy, but for the first time, we have a real blueprint for cleaning it up.

What’s your experience?

Are you building a Lakehouse, sticking with a Warehouse, or drowning in a Data Swamp?

Let me know in the comments.